AI Compliance Guide for Financial Advisory Firms

What financial advisors need to know about AI compliance: SEC, FINRA, state rules & more.

Many financial advisors today use AI tools for meeting notes, client email drafts, or research. But compliance often becomes an afterthought.

The SEC added AI to its 2025 exam priorities, and FINRA's 2026 Oversight Report introduced a dedicated new section on generative AI, covering governance, recordkeeping, and autonomous agents. The White House's March 2026 National AI Policy Framework reinforced this direction, explicitly favoring existing sector-specific regulators over a new federal AI body.

In practice, that means the SEC and FINRA are using their existing authority to police AI outcomes right now.

This guide covers what financial advisors need to know about the regulatory landscape, key risk areas, governance frameworks, and how to evaluate AI tools for financial services before connecting them to client data.

Key Takeaways

- No new AI-specific federal regulations have been enacted as of early 2026. The SEC and FINRA are applying existing rules on supervision, recordkeeping, communications, fiduciary duty, and marketing to AI use.

- In March 2024, the SEC fined two advisers a combined $400,000 for overstating their use of AI. Accurate disclosures are a live enforcement priority.

- Building a basic AI compliance framework is achievable for any practice. Start with an AI use-case inventory, a written compliance policy, and a vendor due diligence file for each tool used with client data.

The Current Regulatory Landscape: What Advisors Need to Know

Three layers of regulation apply: federal securities law enforced by the SEC, FINRA rules for broker-dealer-affiliated advisors, and state-level regulations for state-registered RIA (registered investment adviser) firms.

The SEC's Position: Existing Rules Apply

The SEC has not enacted AI-specific regulations for investment advisers as of early 2026. It applies the Investment Advisers Act of 1940 to AI use, covering fiduciary standards, marketing, recordkeeping, and supervisory procedures.

In March 2024, the SEC settled charges against Delphia and Global Predictions for making false and misleading statements about their use of AI. Delphia paid $225,000 and Global Predictions paid $175,000, for a combined $400,000 in civil penalties from these two cases alone.

Every AI-related claim in your Form ADV, marketing, or client communications must be accurate and defensible.

FINRA's Position: Technology-Neutral Rules

FINRA Regulatory Notice 24-09, issued June 2024, confirmed that FINRA's rules apply equally whether advisors use AI or any other technology. Recordkeeping, customer data protection, risk management, and Regulation Best Interest (Reg BI) all apply.

FINRA Rule 3110 requires firms to establish supervisory systems reasonably designed to achieve compliance.

State Regulators: A Layer Advisors Often Overlook

Federal preemption of state AI laws remains a point of intense debate in Congress, leaving advisors to navigate a patchwork of state-level privacy requirements in California (CCPA), Colorado (CPA), and others.

Check with your state securities administrator and legal counsel for requirements specific to your registration.

Key AI Compliance Risk Areas for Individual Advisors

These are the areas regulators are actively examining:

1. Marketing and Client Communications

Every AI-related claim on your website, marketing, pitch materials, or ADV filings must be accurate, substantiated, and not misleading. Confirm you can document exactly how each tool is used and that your description matches reality.

2. Recordkeeping

When AI generates meeting summaries, drafts emails, or produces investment research, these outputs may qualify as records that require retention. In 2025, the SEC also clarified that AI prompts (the actual instructions you feed the model) can fall under books-and-records obligations if they lead to an investment recommendation. That means you need to archive both the input and the output.

Confirm your records management system captures AI-generated content and the prompts behind any client-facing advice, and keep audit-ready documentation of model usage and any changes made over time.
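As a rough illustration of archiving both the input and the output, the sketch below stores a prompt and its AI-generated output together with a timestamp, the reviewer, and a content hash that makes later alterations detectable. The `AIRecord` fields and the helper names are assumptions made for this example, not a format any regulator prescribes, and a real archive would still need compliant retention storage behind it.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AIRecord:
    """One archived AI interaction. All field names are illustrative."""
    tool: str
    prompt: str
    output: str
    reviewed_by: str
    created_at: str  # ISO 8601 timestamp in UTC
    sha256: str      # digest of tool + prompt + output


def content_digest(tool: str, prompt: str, output: str) -> str:
    """Hash the input and output together so later edits are detectable."""
    payload = json.dumps(
        {"tool": tool, "prompt": prompt, "output": output}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def make_record(tool: str, prompt: str, output: str, reviewed_by: str) -> AIRecord:
    """Build a record at the moment the output is reviewed."""
    return AIRecord(
        tool=tool,
        prompt=prompt,
        output=output,
        reviewed_by=reviewed_by,
        created_at=datetime.now(timezone.utc).isoformat(),
        sha256=content_digest(tool, prompt, output),
    )


def is_unaltered(record: AIRecord) -> bool:
    """Recompute the digest and compare it with the stored one."""
    return record.sha256 == content_digest(record.tool, record.prompt, record.output)


def to_json_line(record: AIRecord) -> str:
    """Serialize one record as a single line for an append-only log file."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing one JSON line per interaction to an append-only file keeps the prompt and the output side by side, which is the pairing an examiner would ask for.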

3. Client Data Privacy and Confidentiality

Regulation S-P requires written policies and procedures to protect customer records. Treat client data privacy as a feature you verify in every AI tool you adopt, not one you assume.

Before connecting any tool to live client data, verify:

- Does the vendor train models on your inputs?

- Where is data stored?

- Does the agreement include confidentiality provisions?

4. Supervision and Oversight

Every AI output requires human review before use. AI can hallucinate and fabricate information, so require sources to be cited, verified, and retained. Monitor AI systems continuously for performance degradation or drift. Validate AI model outputs before deployment in client-facing workflows. Liability cannot be delegated to the tool.

5. Conflicts of Interest and Fiduciary Duty

AI tools that generate investment recommendations can introduce conflicts of interest. A robo-advisor platform built by a product manufacturer could systematically favor that manufacturer's products.

Make sure AI-generated recommendations meet fiduciary standards and that AI tools do not violate suitability or best interest obligations. Disclose relevant conflicts in your Form ADV.

How to Build a Basic AI Governance Framework

AI governance is a structured way to decide which tools to use, how to use them responsibly, and how to document the process. A well-built framework bridges the gap between technology innovation and compliance obligations.

Step 1. Inventory Your Current AI Use

Identify and document all AI use cases across the firm. List every AI tool in use, including tools not explicitly labeled AI. This includes CRMs with AI features, transcription tools, robo-advisor platforms, research tools, and compliance-monitoring systems.

Step 2. Classify Use Cases by Risk Level

Classify each use case by compliance risk. AI-generated client recommendations or communications accessing sensitive client data require more documentation and human review.

Capture this in an AI Risk Register. As part of the classification, review whether each client-facing tool can detect bias and discrimination in the outputs it produces for clients.
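A risk register can start as a very small data structure. The sketch below is one possible way to classify use cases. The three tiers and the rules in `classify` are assumptions chosen for illustration (the guidance above only says that recommendations and client communications that access sensitive data need more documentation and review), so adjust them to your own policy.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class UseCase:
    """One row of a hypothetical AI Risk Register."""
    tool: str
    purpose: str
    touches_client_data: bool
    client_facing: bool          # output reaches clients (emails, reports)
    makes_recommendations: bool  # output contains investment recommendations


def classify(uc: UseCase) -> Risk:
    """Assumed rule set: recommendations, or client-facing output built on
    client data, are HIGH; either factor alone is MEDIUM; the rest is LOW."""
    if uc.makes_recommendations or (uc.client_facing and uc.touches_client_data):
        return Risk.HIGH
    if uc.client_facing or uc.touches_client_data:
        return Risk.MEDIUM
    return Risk.LOW


register = [
    UseCase("research assistant", "market summaries", False, False, False),
    UseCase("notetaker", "internal meeting notes", True, False, False),
    UseCase("email drafter", "client email drafts", True, True, False),
]

for uc in register:
    print(f"{uc.tool}: {classify(uc).value}")
```

Higher tiers would then map to stricter documentation and human-review requirements in your written policy.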

Step 3. Conduct Vendor Due Diligence

Manage third-party AI vendor risk through due diligence protocols. Review terms of service, privacy policies, and data handling practices for each third-party AI provider before using their tool with client data. Build a vendor-due-diligence file for each tool. For broker-dealer-affiliated advisors, verify firm approval first.
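One way to keep a vendor due diligence file honest is to record each answer explicitly and list whatever is still unverified. The following is a minimal sketch. The `VendorFile` fields mirror the questions raised in this guide, but the structure itself and the `open_items` helper are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VendorFile:
    """Hypothetical due diligence file for one AI vendor.
    None means the question has not been verified yet."""
    vendor: str
    trains_on_inputs: Optional[bool] = None
    data_storage_location: Optional[str] = None
    has_confidentiality_terms: Optional[bool] = None
    terms_reviewed_on: Optional[str] = None       # ISO date of last review
    broker_dealer_approved: Optional[bool] = None


def open_items(f: VendorFile, bd_affiliated: bool) -> List[str]:
    """Return the questions that still block use with client data."""
    items = []
    if f.trains_on_inputs is None:
        items.append("Confirm whether the vendor trains models on your inputs")
    elif f.trains_on_inputs:
        items.append("Vendor trains on inputs: resolve before using client data")
    if f.data_storage_location is None:
        items.append("Confirm where data is stored")
    if not f.has_confidentiality_terms:
        items.append("Confirm the agreement includes confidentiality provisions")
    if f.terms_reviewed_on is None:
        items.append("Review terms of service and privacy policy")
    if bd_affiliated and not f.broker_dealer_approved:
        items.append("Obtain broker-dealer approval")
    return items


new_vendor = VendorFile("ExampleVendor")
for item in open_items(new_vendor, bd_affiliated=True):
    print("-", item)
```

An empty list is the signal that the file is complete. Anything else is a reason to hold off on connecting live client data.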

Step 4. Document Your AI Policies

Create a written Compliance Policy describing which tools you use, how they are used, what data can be entered, how outputs are reviewed, and how disclosures are made to clients.

A one-page policy demonstrates intentional governance and creates a starting point for any examination. Pair it with model governance documentation that describes each tool's purpose, data sources, and review process.

Step 5. Review and Update Disclosures

Align AI deployment with SEC and FINRA regulatory requirements. Revisit your Form ADV and client-facing marketing every time you add a new tool. If you use AI for meeting notes but not investment decisions, say exactly that. Always keep disclosures current.

Step 6. Stay Current

Regularly train compliance staff on AI-specific regulatory obligations, and monitor SEC and FINRA guidance consistently so that you are prepared as AI-specific rules evolve.

How to Evaluate an AI Tool for Compliance Readiness

The burden of due diligence falls on you:

- Data handling. Confirm the vendor does not train models on client inputs and verify US-based data storage.

- Security standards. Look for SOC 2 Type 2 certification and alignment with a recognized cybersecurity framework.

- Transparency of AI outputs. Prioritize tools that keep AI decisions explainable for regulatory review, such as those that produce Explainable AI (XAI) outputs.

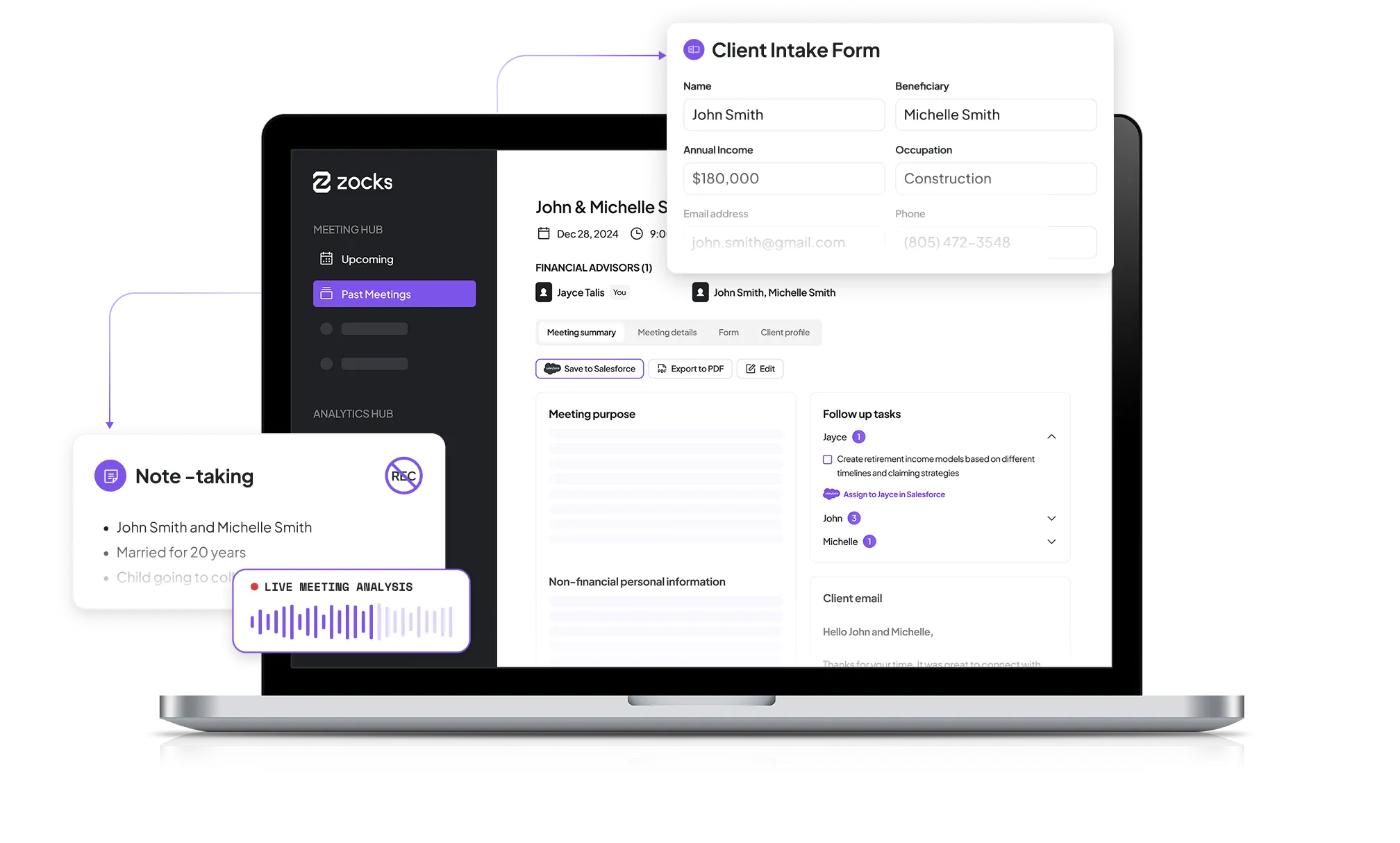

- Financial services experience. Purpose-built tools like Zocks understand the regulatory context in which you operate. Zocks works without recording audio and includes configurable PII redaction, consent management, and enterprise-grade hierarchical controls.

- Broker-dealer approval. Verify your firm has approved the tool before adoption.

Practical Dos and Don'ts for AI Compliance

Treat AI adoption as a compliance decision right from the start. Follow these guidelines:

Do:

- Accurately describe your AI use in all marketing, disclosures, and ADV filings. Update them promptly whenever your practices change.

- Review every AI-generated output, including meeting notes, client emails, action items, and recommendations, before using it. The advisor will always be responsible for the output.

- Read the terms of service of any AI tool before inputting client data. Know where that data goes and how it is used.

- Document your AI practices in a written compliance policy. A well-maintained audit trail is your protection during a regulatory examination.

- Consult your CCO, broker-dealer, or legal counsel before adopting any tool that touches client data or client communications.

- Build a compliance training program that covers your firm's supervisory procedures for AI. Consistent, proactive AI oversight reduces the risk of adverse findings in a regulatory examination.

Avoid:

- Claiming to use AI in your marketing if you do not, or overstating the sophistication of the AI you use. This is exactly the conduct the SEC has brought enforcement actions against.

- Assuming a vendor's financial services positioning satisfies your specific compliance obligations. Validate independently.

- Inputting real client names, account numbers, or sensitive financial details into consumer AI tools without verifying the vendor's data handling practices.
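As a supplementary safeguard for the last point, some practices strip obvious identifiers from text before it reaches any external tool. The sketch below is a deliberately simple, regex-based illustration. The patterns, the placeholder labels, and the `redact` function are assumptions made for this example, they will miss many kinds of sensitive data, and they do not replace verifying the vendor's data handling practices.

```python
import re
from typing import Iterable

# Illustrative patterns only. Real account formats vary by custodian.
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
ACCOUNT = re.compile(r"\b\d{8,12}\b")


def redact(text: str, client_names: Iterable[str]) -> str:
    """Replace common identifiers with placeholders before sharing text."""
    text = SSN.sub("[SSN]", text)
    text = EMAIL.sub("[EMAIL]", text)
    text = ACCOUNT.sub("[ACCOUNT]", text)
    for name in client_names:
        # Known client names come from your own CRM export (assumed input).
        text = re.sub(re.escape(name), "[CLIENT]", text, flags=re.IGNORECASE)
    return text


note = "Jane Doe (SSN 123-45-6789, acct 1234567890) emailed jane@example.com"
print(redact(note, ["Jane Doe"]))
# prints: [CLIENT] (SSN [SSN], acct [ACCOUNT]) emailed [EMAIL]
```

Pattern-based redaction is a backstop rather than a control you can rely on alone, which is why the vendor verification above still comes first.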

Essential Regulatory Resources for Advisor AI Compliance

These are the primary sources to monitor regularly for AI compliance developments:

- SEC 2025 Examination Priorities: If you integrate AI into portfolio management, trading, marketing, or compliance, examiners may review your AI-related policies, procedures, and client disclosures.

- FINRA Regulatory Notice 24-09: The foundational document for Broker-Dealer-affiliated advisors. Clarifies that supervision, recordkeeping, and communication standards all apply to AI tools.

- 2026 FINRA Annual Oversight Report: Outlines how FINRA's existing rules and the securities laws apply to firms using generative AI.

- NIST AI Risk Management Framework: A voluntary, risk-based structure for building a scalable internal AI Risk Register and Model Governance Documentation. Useful for any practice building governance from scratch.

- NASAA: The coordination body for state securities administrators. They actively monitor AI-enabled fraud and misrepresentation concerns at the state level.

For concerns regarding AI compliance, check out our webinar on compliance best practices for financial professionals.

Key Metrics and Questions to Track for Ongoing AI Compliance

AI compliance is an ongoing obligation in practice management. Revisit these questions at least annually, and whenever you add a new AI tool to your workflow.

- Accuracy of disclosures. Do your ADV and marketing materials still accurately reflect your current AI use? Has your AI tool usage changed since your last review?

- Vendor review. Have vendor terms or data handling practices changed? Update your Vendor Due Diligence File whenever they do.

- Output review discipline. Are you and your team consistently reviewing AI-generated outputs before use? An internal auditor can periodically verify that this process is being followed.

- Regulatory updates. Has the SEC, FINRA, or your state securities administrator issued new AI guidance since your last review? Quarterly monitoring of regulatory feeds is a practical baseline.

- Client complaints or questions. Have any clients raised concerns about how you use AI in your practice? These signals feed directly into your AI Risk Register and Compliance Policy updates.
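The review cadence described in this section (a full review at least annually and whenever a tool is added, plus quarterly regulatory monitoring) can be turned into a simple reminder check. This is a minimal sketch. The function name, its parameters, and the day counts used for "annual" and "quarterly" are assumptions for illustration.

```python
from datetime import date, timedelta
from typing import List, Set

ANNUAL = timedelta(days=365)    # assumed definition of "annually"
QUARTERLY = timedelta(days=91)  # assumed definition of "quarterly"


def review_due(
    last_full_review: date,
    last_regulatory_check: date,
    today: date,
    tools_at_last_review: Set[str],
    tools_now: Set[str],
) -> List[str]:
    """Return the reasons a compliance review is due, or an empty list."""
    reasons = []
    if today - last_full_review >= ANNUAL:
        reasons.append("annual review is due")
    if today - last_regulatory_check >= QUARTERLY:
        reasons.append("quarterly regulatory check is due")
    new_tools = sorted(tools_now - tools_at_last_review)
    if new_tools:
        reasons.append("new tool(s) added: " + ", ".join(new_tools))
    return reasons


print(review_due(
    last_full_review=date(2025, 10, 1),
    last_regulatory_check=date(2025, 9, 1),
    today=date(2026, 1, 15),
    tools_at_last_review={"notetaker"},
    tools_now={"notetaker", "crm assistant"},
))
# prints: ['quarterly regulatory check is due', 'new tool(s) added: crm assistant']
```

Changes in vendor terms and client complaints are harder to detect automatically, so those questions still need a person to ask them.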

Frequently Asked Questions

Are there specific AI regulations for financial advisors?

There is no federal law exclusively for AI in financial advisory as of early 2026. The Investment Advisers Act of 1940, Regulation Best Interest (Reg BI), FINRA Rules, and state privacy laws apply to how you use AI today.

What is "AI washing" and why does it matter for advisors?

AI washing is the misleading exaggeration of AI capabilities in marketing or disclosures. The SEC penalized Delphia and Global Predictions a combined $400,000 for it in 2024. It creates regulatory risk, damages your reputation, and breaches client trust.

Can I use a general-purpose AI tool like Claude or ChatGPT with client data?

You can, with conditions. Many AI platforms, including Zocks, are building connectors based on the Model Context Protocol (MCP) so that general-purpose AI tools can be used more safely. But do so only after verifying the vendor's data-handling practices, confirming that no model training is performed on your inputs, and checking whether your broker-dealer has approved the tool. Consumer AI tools are not built for compliance with financial services regulations.

Do FINRA rules apply to the AI I use in my practice?

Yes. Regulatory Notice 24-09 makes this explicit. Supervision, recordkeeping, and communication standards apply to all AI-assisted activities.

How often should I review my AI compliance practices?

At a minimum, annually, and every time you add a new tool. Review your AI Use Case Inventory, Compliance Policy, vendor terms, and disclosures at each cycle.

This guide is for educational and informational purposes only. It does not constitute legal or compliance advice. Regulatory requirements vary based on your registration type, state of operation, broker-dealer affiliation, and individual circumstances. Consult your compliance officer, legal counsel, or a qualified regulatory professional before making compliance decisions.
